Augmented Reality Does Two Things

Lewey Geselowitz, 6/15/2018

Article on Facebook / LinkedIn / Medium

Next in series: AR Contextual Messaging UX

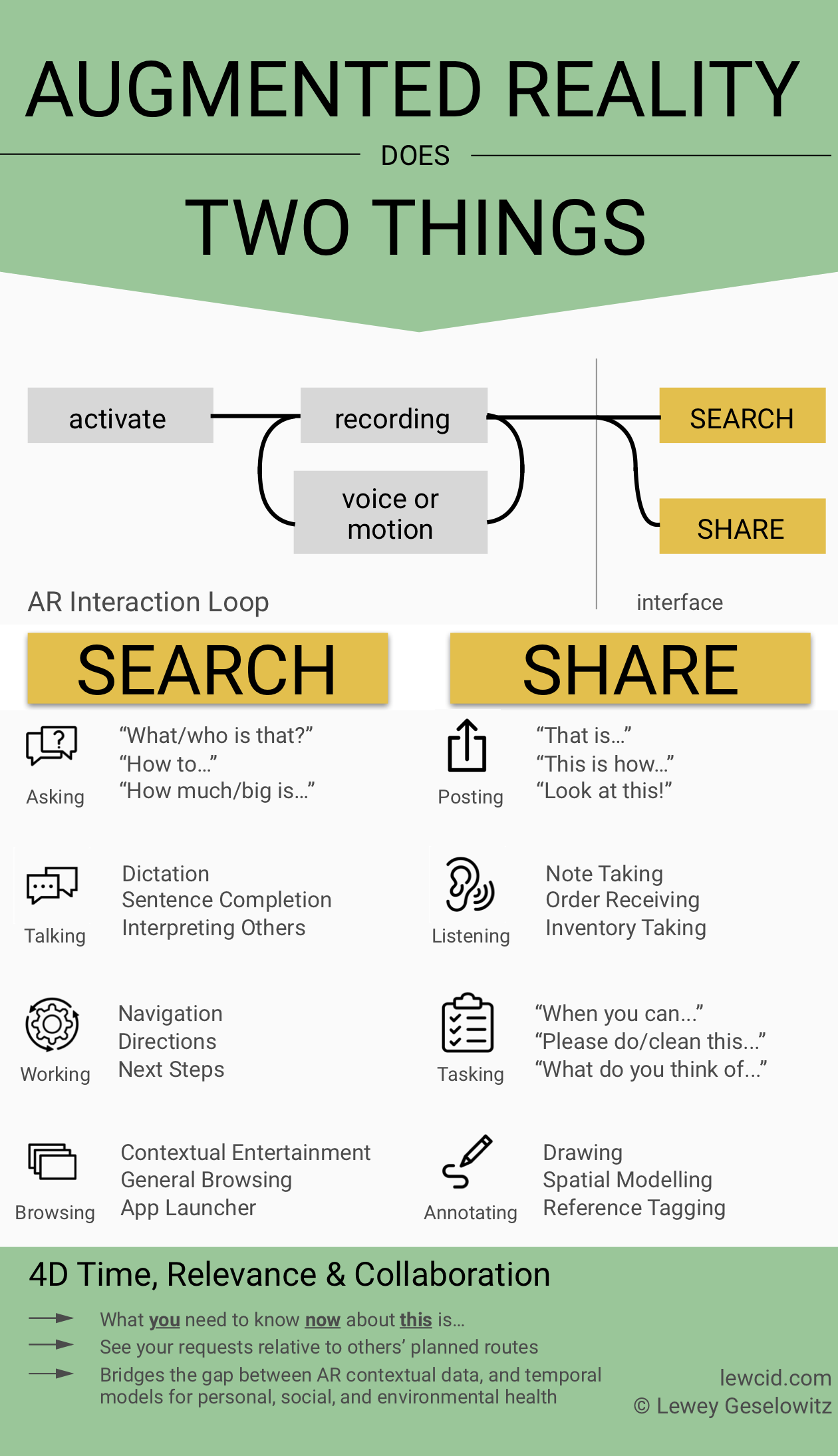

Augmented Reality, which contextualizes information by adding holograms to your view, has two fundamental uses: SEARCHING and SHARING. AR relies on vision sensors that continuously and contextually record and analyze your world. Using these systems therefore naturally centers on one of two things: the results that analysis returns (searching), or posting that information for others to see from their own devices (sharing). From experience on numerous AR products (HoloLens at Microsoft, Magic Leap at Lucasfilm, partners for my startup, consultations, etc.), these two functions are what it all boils down to, and they are how the more successful AR user interfaces will be structured.

A key UX leap here is that “SEARCH” will no longer be a manual, text-only process. It will be the device's default action in response to all input. Likewise, the default mechanism of expression will be this same recording, rather than something abstracted through buttons and controls. Simply living your life will be your continuous search query and your message to the world. Anyone who interacts with you will become part of your search query, until eventually you’ll be able to literally say: I SEE WHAT YOU MEAN.

Relevance, or the prioritization of search results, is already a huge factor in social media trending, and it will become more and more important as it weaves into every augmented interaction we make. To really nail this last level of tuning, one has to ask: “What is the ultimate relevance mechanism?” The simplest answer is, of course, nature itself: we tie relevance into the biological rhythms of people, society and the earth. This “4D” temporal relevance, spanning daily circadian body rhythms, business cycles and natural ecosystem cycles, will eventually form a universal measure of relevance for our part in building a peaceful and regenerative world (hence my startup: 4dprocess.com).

Tune in next time for more on user-generated contextual messaging, the art and practicalities of AR UI layout, and the living sculpture of volumetric ecosystem modelling.

Graphic design and edits by UX Researcher Allison Crady